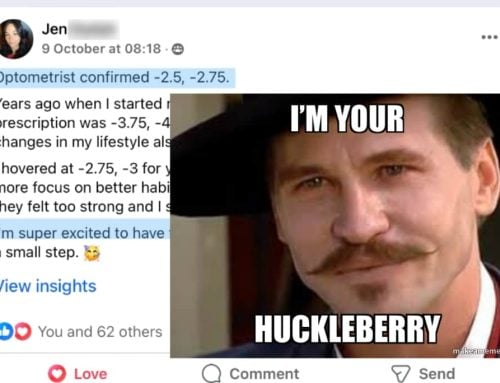

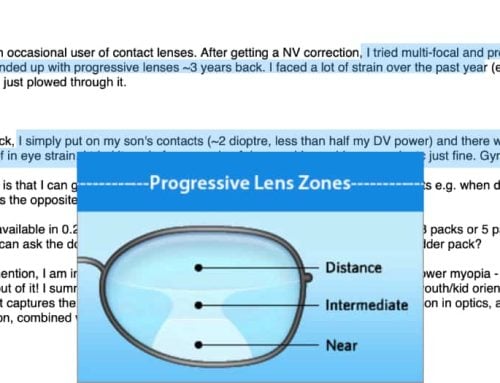

Got a fairly complex one-on-one case that’s in the early evaluation phase.

Basically, it’s measurement time. Lots and lots of measurements, taken throughout the day and tied to specific activities, so I get as much data as possible on the person’s day and its effect on their eyesight.

This is part 2. Part 1 was capturing the routines and schedule of the average day.

We try to get a nice, usable snapshot of the baseline of what’s going on. We don’t want to change anything at the beginning, just observe and have a platform from which we can test specific small adjustments.

After that: a full plan for reversing myopia, built on the above.

So I used the latest pricey Claude Opus to see what it can do. Anonymized, just data. First, to see how good it is at spotting trends in the data and summarizing it, basically just being a lab assistant with simple stuff.

Second, what it sees in terms of meaningful impacts of specific activities on the data.

Third, if we go all in, what its advice would be.

tl;dr: It’s f*cking garbage.

Garbage.

It’s not even able to reliably summarize data. Horrid. I have no idea how this qualifies as any sort of magical, awesome new tool that is going to change the world. Sure, OK, maybe it’s good at coding or whatever (I don’t code). Maybe this is the wrong model.

But it’s sh*t.

It can’t summarize. It can’t spot trends. Its advice is straight-up BAD.

This isn’t half usable or a good start or something to look forward to using more. It’s half talking parrot, half fortune teller.

What absolute trash.

It admits it, too:

claude opus 4.6 vision improvement advice

I DO want AI to get better. I’m one guy; I can’t give everybody advice. Best case for all of us is having a million Jakes you can just spin up to give you perfect directions.

This isn’t it. I feel bad for people who use this and get crappy advice.

Maybe it can handle basic myopia, having scraped the version 1 website data. But you’re still better off spending a week or two learning and doing it DIY.

Cheers,

-Jake